“Another Body” & the Disturbing Rise of Deepfakes

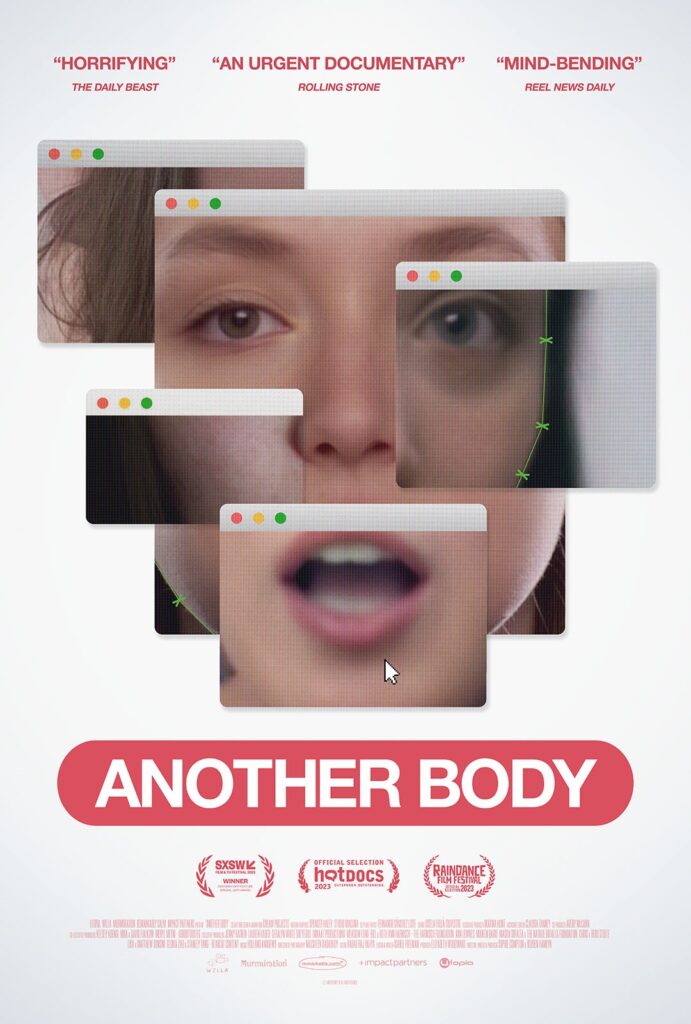

The recent documentary “Another Body” provides a disturbing look into the real-world impacts of deepfake technology — specifically, how it is being used to create nonconsensual fake pornography.

Directed, written, and produced by Sophie Compton and Reubyn Hamlyn, the film, which had its world premiere at South By Southwest this year, chronicles a young woman named Taylor who discovers that someone has used A.I. technology and photos from her social media profiles to create realistic fake pornography featuring her face.

Taylor describes the psychological devastation of finding herself violated through these videos. She discusses feeling unsafe knowing the deepfakes could help strangers track her down in real life. And despite police reports, she learns there are few laws actually banning this nonconsensual objectification.

What’s more, as the film shows, a friend of Taylor’s from college has suffered the same fate. Then, in a dramatic twist (spoiler alert!), the filmmakers reveal that Taylor and the other subjects of the film have themselves been deepfaked, consensually and using the same technology that was used maliciously, to protect their identities while sharing their stories and to showcase the technology’s capabilities for both good and bad actors.

As Compton explained:

“It was really important to us that everything we anonymized was just like it happened and we only changed the details that were identifying. We wanted to reflect the exact truth and the spirit of the story.”

Through Taylor’s story, “Another Body” exposes deepfakes as the latest evolution of technology being used to harass and subjugate women. This dark and deeply troubling reality is undoubtedly among the most harmful issues posed by deepfakes.

But it is not the only one. As A.I. continues advancing rapidly, deepfakes have the potential to undermine truth and trust in all realms of society.

Let’s explore the topic in more depth.

What are deepfakes, and how do they work?

Deepfakes use powerful A.I. techniques to manipulate or generate video, audio, and imagery that digitally impersonate people’s faces, voices, and motions. They are often made without the person’s permission and for nefarious purposes.

While photo and video manipulation has existed for decades, deepfakes take it to an unprecedented level, generating strikingly convincing forged footage that can sometimes even hold up against professional scrutiny.

This is possible due to recent breakthroughs in deep learning algorithms.

The “deep” in deepfakes refers to deep learning. This advanced form of machine learning uses neural networks modeled after the architecture of the human brain. By processing massive datasets, deep learning algorithms can analyze patterns and “learn” complex tasks like computer vision and speech recognition.

Specifically, networks analyze images and/or videos of a target person to extract key information about their facial expressions. Using this data, the algorithm can reconstruct their face, mimic their facial expressions, and match mouth shapes.

This enables realistic face swapping.

So, if Person A is deepfaked, and Person B is the new video source, the algorithm can convincingly depict Person A’s face doing whatever Person B is doing.
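To make the Person A / Person B description a little more concrete, here is a minimal sketch of how data could flow through one common face-swap design: a single shared encoder plus one decoder per person. That architecture is an assumption on our part rather than something described above, and every name and size below is hypothetical. The weights are random and untrained, so the output is meaningless noise; the point is only to show which piece feeds which.

```python
import random

def make_layer(n_in, n_out, rng):
    """Random (untrained) weight matrix standing in for a learned layer."""
    return [[rng.uniform(-1, 1) for _ in range(n_out)] for _ in range(n_in)]

def apply_layer(vector, weights):
    """Multiply a row vector by a weight matrix."""
    return [sum(v * row[j] for v, row in zip(vector, weights))
            for j in range(len(weights[0]))]

rng = random.Random(0)
PIXELS, CODE = 16, 4  # toy sizes (assumed); real models are far larger

# Assumed design: one encoder shared by both people, one decoder per person.
shared_encoder = make_layer(PIXELS, CODE, rng)  # face -> expression/pose code
decoder_a = make_layer(CODE, PIXELS, rng)       # code -> Person A's face
decoder_b = make_layer(CODE, PIXELS, rng)       # code -> Person B's face

def reconstruct_b(frame_of_b):
    """What training would optimize: B's frame in, B's frame back out."""
    return apply_layer(apply_layer(frame_of_b, shared_encoder), decoder_b)

def face_swap(frame_of_b):
    """The swap: encode a frame of Person B, decode with Person A's decoder."""
    code = apply_layer(frame_of_b, shared_encoder)
    return apply_layer(code, decoder_a)

frame_of_b = [rng.random() for _ in range(PIXELS)]  # stand-in for one video frame
swapped = face_swap(frame_of_b)
```

In a trained system of this kind, the shared code would capture what Person B is doing (expression, pose), while Person A's decoder would supply what Person A looks like.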

The results can appear remarkably life-like.

The term “deepfake” itself dates to 2017, when a Redditor calling themselves “deepfakes” shared pornography featuring celebrities’ faces swapped onto adult videos using artificial intelligence.

The increasing realism quickly led Reddit to ban the community, but the momentum was already unstoppable. Around 2018, consumer apps brought deepfake creation to the masses. As algorithms improved, users could produce fabricated videos, voices, and images from home PCs in minutes.

In parallel, developers built deepfake tools for gaming, film production, virtual assistants, and training content. Meanwhile, political usage emerged globally in parody videos and propaganda.

Experts estimate that at least 500,000 video and voice deepfakes have circulated on social media this year alone. As the quality of the outputs continues to advance rapidly, many deepfakes now pass the “naked eye test,” making them all but indistinguishable from reality without forensic analysis.

The scale of this volatile technology presents an unprecedented risk for safety, geopolitics, and truth itself if left unchecked.

Potential Threats of Deepfakes

Beyond personal violations like those exposed in “Another Body,” deepfakes threaten wide-ranging harms:

Disinformation Wars

Manipulated videos and imagery could convincingly depict political leaders declaring election tampering, demanding violence from supporters, using slurs, or any number of dangerous scenarios. Deepfakes could help sway tightly contested races or erode trust in government institutions over time.

For example, in 2020 a video of Nancy Pelosi, manipulated with traditional editing techniques to slow down her speech and make her appear inebriated, quickly went viral on social media and affected the news cycle.

As another real-world example, Andy Parsons, head of Adobe’s Content Authenticity Initiative, discussed in our interview how Trump’s Access Hollywood tape might have been discredited through accusations of fakery if the technology had existed then.

Financial Crime

A.I. voice cloning services already enable fraudsters to imitate executives and scam companies. As the tech advances, doctored footage may trick more victims or simulate in-person identity theft. Forged media could also manipulate stock prices or cryptocurrency values.

Escalating Global Conflicts

Distributed deepfakes posing as citizen journalism could show fake atrocities committed by one nation to spark reprisal attacks from adversaries. Even proven fakes could worsen tensions. This risk intensifies as tensions flare between nuclear-armed states.

Undermining Truth Itself

Widespread synthetic media poses threats to truth and reality that we’re only beginning to fathom. Experts warn it may fuel widespread cynicism and doubt in all video evidence. People could start questioning whether major events even occurred or rejecting inconvenient truths as “deepfakes.”

In our interview, Gerfried Stocker, artistic director and co-CEO of Ars Electronica, discusses this in the context of manufactured realities.

During the ongoing war between Israel and Hamas, misinformation and disinformation are playing a role in stoking emotions and attempting to sow division online. While most of this so far has not been deepfaked, some very realistic fake imagery has appeared.

The existence of deepfake technology also creates another insidious issue: it muddies the water so that observers doubt even real photos and videos.

The potential for deepfakes to further disrupt this and other future conflicts and global events is deeply concerning.

Additionally, with the 2024 elections in the U.S. and 39 other countries nearing, officials are extremely worried about what havoc deepfakes could wreak.

Bad actors could leverage deepfakes to erode public trust, turn groups against each other, and undermine credibility in the results. And with political polarization and diminishing trust in institutions already high, this misinformation could tip society over the edge.

Fighting Back Against Deepfakes

So what can be done against deepfakes spreading chaos, psychological abuse, and disinformation? The movement towards legal solutions seems slow amid debates around free speech and exactly what constitutes unacceptable manipulated media. Some countries have begun introducing restrictions, but global coordination remains poor.

On the technology front, researchers are locked in an “arms race” trying to improve deepfake generation versus deepfake detection. But so far humans seem better than algorithms at spotting fakes.

The Content Authenticity Initiative is a community of media and tech companies, NGOs, academics, and others working to promote the adoption of an open industry standard for content authenticity and provenance. It recently introduced a Content Credentials “icon of transparency,” a mark intended to give creators, marketers, and consumers around the world a signal of trustworthy digital content.
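For a rough feel of what provenance checking means in practice, here is a simplified sketch of tamper-evident media. It is not the Content Credentials standard, which is considerably more involved; it is a stand-in that uses a shared secret key and Python's built-in `hmac` module. The key, the sample bytes, and the function names are all hypothetical.

```python
import hashlib
import hmac

def sign_content(content: bytes, key: bytes) -> str:
    """Return a hex tag that binds the publisher's key to these exact bytes."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()

def verify_content(content: bytes, key: bytes, tag: str) -> bool:
    """True only if the content is byte-for-byte what was originally signed."""
    return hmac.compare_digest(sign_content(content, key), tag)

# Hypothetical publisher key and media bytes, for illustration only.
key = b"hypothetical-publisher-key"
original = b"bytes of the original video file"
tag = sign_content(original, key)

untouched_ok = verify_content(original, key, tag)
tampered_ok = verify_content(original + b" (edited)", key, tag)
```

Here `untouched_ok` is `True` and `tampered_ok` is `False`: any change to the bytes, however small, invalidates the tag. That is the basic property a provenance mark relies on, whatever the underlying cryptography.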

Legislative solutions are also being introduced, both state by state and in the federal government. If passed, bills such as Representative Yvette Clarke’s DEEPFAKES Accountability Act would seek to protect individuals nationwide from deepfakes by criminalizing such materials and providing prosecutors, regulators, and particularly victims with resources.

While there is no national legislation specifically addressing deepfakes at this time, and while aspects of Clarke’s bill in particular and government regulation of A.I. in general remain under debate, tech companies and elected officials are generally aligned in their concerns about the dangers of leaving deepfakes unchecked.

Social context also aids in detecting deepfaked media.

If a public figure appears to be doing something wildly out of character or illogical, skepticism is warranted. Relying on trusted authorities and fact-checkers can also help confirm or debunk dubious media.

Ultimately we all must approach online content with sharpened critical thinking skills. Assume everything is suspect, check sources, and dig deeper before reacting or spreading.

Our individual actions online will decide whether truth drowns or stays afloat.

Here are some tips for identifying possible deepfakes yourself:

- Look around mouth/teeth – strange textures may indicate manipulated imagery

- Check lighting/shadows for inconsistencies

- See if reflections in eyes/glasses seem accurate

- Notice any jittery motion, especially in face/head area

- Watch background details for artifacts or blurriness

- Listen for odd cadence/tone/quality in audio

And most importantly — verify using outside trusted sources before believing!

Staying Safe Online in 2023

The internet is a treacherous place these days. Between risks of fraud by voice deepfakes, nonconsensual porn videos featuring our faces, and mass manipulation through misinformation and disinformation, pitfalls abound.

Yet, there are still some precautions you can take to minimize your risk exposure.

Start by limiting the personal information you share publicly online or with strangers. Be sparing about revealing locations, family details, ID documents, or other sensitive materials. Lock down social media privacy settings and remove old posts that no longer represent you well. Next, set up multifactor authentication wherever possible to protect accounts from unauthorized access. Use a randomly generated password that is unique to each site, and store your passwords in a reputable password manager.
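A password manager will normally generate passwords for you, but for the curious, here is a minimal sketch of what “randomly generated” looks like, using Python's `secrets` module, which is designed for security-sensitive randomness. The function name, the character set, and the 12-character minimum are our own assumptions, not a formal recommendation.

```python
import secrets
import string

# Assumed character set: letters, digits, and punctuation.
ALPHABET = string.ascii_letters + string.digits + string.punctuation

def generate_password(length: int = 20) -> str:
    """Build a password by drawing each character from a secure random source."""
    if length < 12:
        # Assumed floor for this sketch; shorter passwords are easier to guess.
        raise ValueError("use at least 12 characters")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))

# One fresh password per site, never reused.
password_for_site_one = generate_password()
password_for_site_two = generate_password()
```

Some sites reject certain punctuation characters, so in practice you may need to narrow the character set for a particular site.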

When encountering dubious claims, photos, or videos online, remember to verify using outside authoritative sources before circulating or reacting. Seek out high-quality journalism to stay accurately informed rather than hyperpartisan outrage bait. Fact-checking sites can assess the credibility of viral stories.

If confronted with deepfake pornography or identity theft, immediately document evidence and file reports. Sadly, local authorities often lack resources for high-tech crimes, but they may assist with removing content under certain laws or refer cases to investigators. Reach out to supportive communities dealing with online exploitation as well.

Finally, in the latest Creativity Squared interview, Maya Daver-Massion, Head of Online Safety at London-based digital transformation partner PUBLIC, mentioned organizations like the National Center for Missing and Exploited Children, which offers resources for parents and age-appropriate learning materials for kids about staying safe online.

She also discussed the Internet Watch Foundation, another organization on the front lines, which helps companies purge child sexual abuse materials (CSAM) from their servers and assists law enforcement in prosecuting those who traffic in CSAM. U.K.-based Parent Zone has also developed a tool with Google called “Be Internet Legends” for 7- to 11-year-olds, which gamifies the online safety learning experience.

Simultaneously, other platforms are working to label and remove harmful synthetic content, government agencies are striving to improve deepfake detection, and legal experts are prototyping laws against harmful generative media.

Some consumer apps even empower users to watermark and certify their videos.

As “Another Body” demonstrates, the threats are daunting. However, through ethical engineering practices, projects like the Content Authenticity Initiative, updated policies, legal consequences, and our own human critical thinking abilities (we do still have those, even in 2023!), there remains hope for a digital landscape that favors truth over deceit and offers a safe online environment for all.
