Truth in the Balance: The Critical Role of Media Literacy Education in the Age of Algorithms & GenAI

Over the past seven years, social media and the engagement economy have created the conditions for a “post-truth” era in the global information ecosystem, one in which truth is a popularity contest enabled and amplified by algorithms. Artificial intelligence now threatens to break the system completely by making it possible for anyone to fabricate evidence of anything to further their agenda.

Despite the harms society has already endured, digital citizens are unprepared for the onslaught of disinformation that experts expect. Now more than ever, academic institutions and governments need to prioritize media literacy education to protect societies from chaos.

Disinformation is Pervasive, and We’re Unprepared for More

Propagators of mis- and disinformation used generative artificial intelligence to sow division, discredit political opponents, or manipulate public opinion in at least 16 countries over the past year, according to Freedom House’s 2023 Freedom on the Net report.

Manipulated audio of President Joe Biden appearing to make transphobic remarks circulated on social media. Donald Trump and Dr. Anthony Fauci were also targeted by images manipulated to make the two appear to be embracing. In Venezuela earlier this year, state media outlets distributed deepfake videos in which nonexistent anchors on a fabricated news channel spread propaganda.

These are only a few glaring examples of how A.I. is degrading the quality of the global information ecosystem; the problem also plays out at a much more granular, everyday level. In a survey of 10,000 British and American adults, half of respondents said they encounter false or misleading content weekly. Even Freedom House acknowledges that its report likely undercounts A.I.-generated mis- and disinformation campaigns.

Almost 60 percent of Americans think that A.I. will increase the spread of misinformation during the 2024 U.S. presidential election, according to new polling by The Associated Press.

Efforts such as Adobe’s Content Authenticity Initiative (CAI) aim to eliminate the guesswork in identifying images that have been generated or manipulated by artificial intelligence. In episode seven of Creativity Squared, CAI’s Senior Director, Andy Parsons, discusses why content authenticity must be verifiable and the technology the initiative is developing to make that possible.

Hardware solutions for image authenticity are coming to market as well. This week’s episode of Creativity Squared features Kiran Karnani, Vice President of Marketing at Leica Camera North America (Leica is also a member of the Content Authenticity Initiative), who discusses the iconic brand’s newest offering: the world’s first camera that automatically assigns content credentials to every photo it captures.

However, the rapid pace of A.I. development and ease of access means that trying to stem the tide of harmful A.I.-generated content may forever be a game of whack-a-mole.

At the other end of the information superhighway, media consumers need to be better equipped to evaluate the content they encounter. A Poynter survey found that 75 percent of respondents were not confident they could identify online misinformation. And almost two-thirds of American adults received no media literacy training at any point in their schooling, according to a 2022 study by the nonprofit Media Literacy Now.

Today’s youth are not necessarily better prepared. In a 2016 study, the Stanford History Education Group found that college-age and younger “digital natives” often could not distinguish between ads and news articles or identify clear signs of political bias.

The study’s authors said, “Overall, young people’s ability to reason about the information on the internet can be summed up in one word: bleak.”

Even today, only three U.S. states mandate media literacy education in K-12 schooling, even as teens increasingly name “other people on TikTok and Instagram” as their primary news sources. A nationwide survey of 18- to 24-year-old Canadians this year found that 84 percent were not sure they could distinguish true from false content on social media, and 73 percent said they follow at least one social media influencer who has expressed anti-science views.

Countering the Content Confidence Crisis

Policymakers, academics, and nonprofit groups are developing responses to our crisis of confidence in online content.

The News Literacy Project (NLP) is a nonpartisan nonprofit that helps people judge the credibility of news and other information and recognize the standards of fact-based journalism, so they know what to trust, share, and act on. The project offers resources for educators, as well as webinars and a podcast dedicated to news literacy.

NLP and other groups, such as the grassroots education policy advocacy group Media Literacy Now (MLN), are mobilizing communities to press policymakers for mandatory media literacy education. According to MLN’s 2022 Media Literacy Policy Report, bipartisan cooperation is growing around the need to do more.

Last month, Governor Gavin Newsom signed a bill to make California the fourth U.S. state to mandate K-12 media literacy training. However, there’s been little momentum in Congress for a bill introduced last year to establish federal funding for media literacy programs in local schools.

Companies, nonprofit groups, and think tanks are trying to pick up the slack by developing free media literacy curricula that schools can adopt.

Adobe’s Content Authenticity Initiative offers media literacy curricula for college, high school, and middle school through the Adobe Education Exchange. Course topics include “The Basics of Media Literacy” for middle schoolers and “Visual Literacy” for higher-education students.

Each curriculum includes a foundational unit as well as lessons for use in social studies, the arts, and English language arts (ELA), with media literacy lessons and themes integrated throughout.

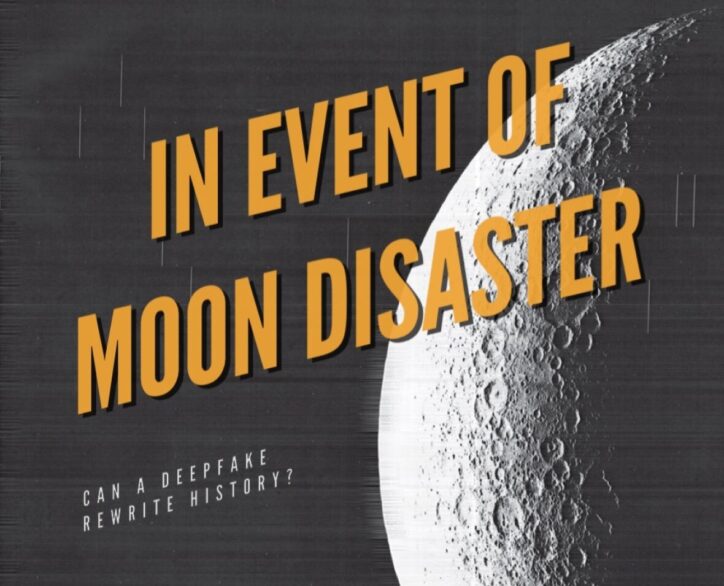

The MIT Center for Advanced Virtuality (CAV) offers a free online mini-course, “Media Literacy in the Age of Deepfakes,” which provides tips and case studies for thinking critically about misinformation, along with a brief history of how our media landscape arrived at this point. The third and final module uses CAV’s multi-platform, immersive project In Event of Moon Disaster to explore the implications of deepfake technology through an alternate history in which (an A.I.-generated clone of) Richard Nixon addresses the nation after the failure of the Apollo 11 mission.

Regulation and Education: Fighting Disinformation Holistically

It’s not enough for schools to teach students how to use technology without also teaching its risks. But funding determines focus. Surveys show that adults are not inherently better or worse than young people at discerning mis- and disinformation, so time and effort have to go into training teachers to prepare students for a media environment radically different from the one earlier generations encountered.

To accomplish that fairly and equitably, Congress needs to heed the call of advocacy groups and dedicate resources to steeling Americans against the harms of malicious disinformation.

While ongoing efforts to regulate the cutting edge of the A.I. industry are worthwhile, a holistic approach to fortifying the social fabric should also encourage a culture of reasonable skepticism across all age groups.

If leaders continue to neglect the need to strengthen media literacy, no amount of regulation will prevent future instances of A.I.-enabled mass deception and social unrest.
